Choose the Right Text Pattern Tool: Regex, Pregex, or Pyparsing

Table of Contents

Introduction

Dataset Generation

Simple Regex: Basic Pattern Extraction

pregex: Build Readable Patterns

pyparsing: Parse Structured Ticket Headers

Conclusion

Introduction

Imagine you’re analyzing customer support tickets to extract contact information and error details. The messages contain email addresses in various formats and phone numbers with inconsistent formatting (some written as (555) 123-4567, others as 555-123-4567):

| ticket_id | message |
|---|---|
| 0 | Contact me at nichole70@kemp.com or (798)034-3254 to resolve this issue. |
| 1 | You can reach me by phone (970-295-1452) or email (russellbrandon@simon-rogers.org) anytime. |
| 2 | My contact details: ehamilton@silva.io and 242 844 7293. |
| 3 | Feel free to call 901.794.1337 or email ogarcia@howell-chavez.net for assistance. |

How do you extract the email addresses and phone numbers from the tickets?

This article shows three approaches to text pattern matching: regex, pregex, and pyparsing.

Stay Current with CodeCut

Actionable Python tips, curated for busy data pros. Skim in under 2 minutes, three times a week.


Key Takeaways

Here’s what you’ll learn:

Understand when regex patterns are sufficient and when they fall short

Write maintainable text extraction code using pregex’s readable components

Parse structured text with inconsistent formatting using pyparsing

💻 Get the Code: The complete source code and Jupyter notebook for this tutorial are available on GitHub. Clone it to follow along!

Dataset Generation

Let’s create sample datasets that will be used throughout the article. We’ll generate customer support ticket data using the Faker library:

Install Faker:

pip install faker

First, let’s generate customer support tickets with simple contact information:

from faker import Faker
import csv
import os
import pandas as pd

fake = Faker()
Faker.seed(40)

# Define one phone pattern per ticket (numerify replaces each # with a digit)
phone_patterns = ["(###)###-####", "###-###-####", "### ### ####", "###.###.####"]

# Define one email TLD per ticket
email_tlds = [".com", ".org", ".io", ".net"]

# Generate phone numbers and emails
phones = []
emails = []
for i in range(4):
    # Generate phone with specific pattern
    phone = fake.numerify(text=phone_patterns[i])
    phones.append(phone)

    # Generate email with specific TLD
    email = fake.user_name() + "@" + fake.domain_word() + email_tlds[i]
    emails.append(email)

# Define sentence structures
sentence_structures = [
    lambda p, e: f"Contact me at {e} or {p} to resolve this issue.",
    lambda p, e: f"You can reach me by phone ({p}) or email ({e}) anytime.",
    lambda p, e: f"My contact details: {e} and {p}.",
    lambda p, e: f"Feel free to call {p} or email {e} for assistance.",
]

# Create CSV with 4 rows (create the output folder first if it is missing)
os.makedirs("data", exist_ok=True)
with open("data/tickets.csv", "w", newline="") as f:
    writer = csv.writer(f)
    writer.writerow(["ticket_id", "message"])
    for i in range(4):
        message = sentence_structures[i](phones[i], emails[i])
        writer.writerow([i, message])
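Faker's numerify() is what turns each template above into a phone number: every # becomes a random digit. To see that behavior without installing Faker, here is a rough standard-library approximation. numerify_hashes is a hypothetical helper, not Faker's implementation; it only handles #, and its digits will differ from the dataset above.

```python
import random
import re


def numerify_hashes(template: str, rng: random.Random) -> str:
    """Hypothetical helper: replace every '#' in template with a random digit 0-9."""
    return "".join(str(rng.randint(0, 9)) if ch == "#" else ch for ch in template)


rng = random.Random(40)  # seeded so repeated runs give the same digits
phone_patterns = ["(###)###-####", "###-###-####", "### ### ####", "###.###.####"]
phones = [numerify_hashes(p, rng) for p in phone_patterns]

for template, phone in zip(phone_patterns, phones):
    # Build the expected shape: '#' -> any digit, every other character literal
    shape = "".join(r"\d" if ch == "#" else re.escape(ch) for ch in template)
    print(f"{template:15} -> {phone:15} shape ok: {bool(re.fullmatch(shape, phone))}")
```

Punctuation and spaces from the template survive unchanged, which is why each generated number keeps its distinct format.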

Set the display option to show the full width of the columns:

pd.set_option("display.max_colwidth", None)

Load and preview the tickets dataset:

df_tickets = pd.read_csv("data/tickets.csv")

df_tickets.head()

| ticket_id | message |
|---|---|
| 0 | Contact me at nichole70@kemp.com or (798)034-3254 to resolve this issue. |
| 1 | You can reach me by phone (970-295-1452) or email (russellbrandon@simon-rogers.org) anytime. |
| 2 | My contact details: ehamilton@silva.io and 242 844 7293. |
| 3 | Feel free to call 901.794.1337 or email ogarcia@howell-chavez.net for assistance. |

Simple Regex: Basic Pattern Extraction

Regular expressions (regex) are patterns that match text based on rules. They excel at finding structured data like emails, phone numbers, and dates in unstructured text.

Extract Email Addresses

Start with a simple pattern for basic email formats. It consists of:

Username: [a-z]+ – One or more lowercase letters (e.g. maria)

Separator: @ – Literal @ symbol

Domain: [a-z]+ – One or more lowercase letters (e.g. gmail or outlook)

Dot: \. – Literal dot (escaped)

Extension: (?:org|net|com|io) – Match specific extensions (e.g. .com, .org, .io, .net)

import re

# Match basic email format: letters@domain.extension
email_pattern = r'[a-z]+@[a-z]+\.(?:org|net|com|io)'

df_tickets['emails'] = df_tickets['message'].apply(
    lambda x: re.findall(email_pattern, x)
)
df_tickets[['message', 'emails']].head()

| | message | emails |
|---|---|---|
| 0 | Contact me at nichole70@kemp.com or (798)034-3254 to resolve this issue. | [] |
| 1 | You can reach me by phone (970-295-1452) or email (russellbrandon@simon-rogers.org) anytime. | [] |
| 2 | My contact details: ehamilton@silva.io and 242 844 7293. | [ehamilton@silva.io] |
| 3 | Feel free to call 901.794.1337 or email ogarcia@howell-chavez.net for assistance. | [] |

This pattern works for simple emails but misses variations with:

Other characters in the username such as numbers, dots, underscores, plus signs, or hyphens

Other characters in the domain such as numbers, dots, or hyphens

Other extensions that are not .com, .org, .io, or .net

Let’s expand the pattern to handle more formats:

# Handle emails with numbers, dots, underscores, hyphens, plus signs
improved_email = r'[a-zA-Z0-9._%+-]+@[a-zA-Z0-9.-]+\.[a-zA-Z]{2,}'

df_tickets['emails_improved'] = df_tickets['message'].apply(
    lambda x: re.findall(improved_email, x)
)
df_tickets[['message', 'emails_improved']].head()

| | message | emails_improved |
|---|---|---|
| 0 | Contact me at nichole70@kemp.com or (798)034-3254 to resolve this issue. | [nichole70@kemp.com] |
| 1 | You can reach me by phone (970-295-1452) or email (russellbrandon@simon-rogers.org) anytime. | [russellbrandon@simon-rogers.org] |
| 2 | My contact details: ehamilton@silva.io and 242 844 7293. | [ehamilton@silva.io] |
| 3 | Feel free to call 901.794.1337 or email ogarcia@howell-chavez.net for assistance. | [ogarcia@howell-chavez.net] |

The improved pattern successfully extracts all emails from the tickets! Let’s move on to extracting phone numbers.
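To see the difference on addresses outside this small dataset, the check below runs re.fullmatch with both patterns on a few made-up addresses, each exercising one of the gaps listed earlier. fullmatch asks whether the whole string fits the pattern, which is stricter than the findall calls above but keeps the comparison easy to read.

```python
import re

simple_email = r'[a-z]+@[a-z]+\.(?:org|net|com|io)'
improved_email = r'[a-zA-Z0-9._%+-]+@[a-zA-Z0-9.-]+\.[a-zA-Z]{2,}'

# Made-up addresses, one per gap in the simple pattern
samples = [
    "ehamilton@silva.io",                # letters only: both patterns should accept it
    "nichole70@kemp.com",                # digits in the username
    "first.last+news@sub-domain.co.uk",  # dot, plus sign, hyphen, multi-part domain
    "info@shop.store",                   # extension outside com/org/net/io
]

# (simple accepts?, improved accepts?) for each address
results = {
    s: (bool(re.fullmatch(simple_email, s)), bool(re.fullmatch(improved_email, s)))
    for s in samples
}
for address, (simple_ok, improved_ok) in results.items():
    print(f"{address:34} simple={simple_ok} improved={improved_ok}")
```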

Extract Phone Numbers

The tickets use four common phone number formats:

(XXX)XXX-XXXX – With parentheses

XXX-XXX-XXXX – With hyphens

XXX XXX XXXX – With spaces

XXX.XXX.XXXX – With dots

To handle all four phone formats, we can use the following pattern:

\(? – Optional opening parenthesis

\d{3} – Exactly 3 digits (area code)

\)? – Optional closing parenthesis

[-.\s]? – Optional hyphen, dot, or space

\d{3} – Exactly 3 digits (prefix)

[-.\s] – Required hyphen, dot, or space

\d{4} – Exactly 4 digits (line number)

# Define phone pattern
phone_pattern = r'\(?\d{3}\)?[-.\s]?\d{3}[-.\s]\d{4}'

df_tickets['phones'] = df_tickets['message'].apply(
    lambda x: re.findall(phone_pattern, x)
)
df_tickets[['message', 'phones']].head()

| | message | phones |
|---|---|---|
| 0 | Contact me at hfuentes@anderson.com or (798)034-3254 to resolve this issue. | [(798)034-3254] |
| 1 | You can reach me by phone (702-951-4528) or email (russellbrandon@simon-rogers.org) anytime. | [(702-951-4528] |
| 2 | My contact details: ehamilton@silva.io and 242 844 7293. | [242 844 7293] |
| 3 | Feel free to call 901.794.1337 or email ogarcia@howell-chavez.net for assistance. | [901.794.1337] |

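Awesome! The pattern finds a phone number in every ticket. In ticket 1, the match also includes the opening parenthesis that wraps the number, because the optional \(? consumes it and nothing requires a matching closing parenthesis.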
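A quick way to confirm which inputs the pattern accepts is to run re.fullmatch on one made-up number per format. The last checks show two consequences of how the pattern is written: the separator before the last four digits is required, so an unformatted ten-digit run is rejected, and inside running text the optional \(? also picks up a parenthesis that only wraps the number.

```python
import re

phone_pattern = r'\(?\d{3}\)?[-.\s]?\d{3}[-.\s]\d{4}'

# One made-up number per format, plus two edge cases
samples = {
    "(555)123-4567": "parentheses",
    "555-123-4567": "hyphens",
    "555 123 4567": "spaces",
    "555.123.4567": "dots",
    "(555) 123-4567": "parentheses + space",
    "5551234567": "no separators",
}

# fullmatch: does the whole string fit the pattern?
accepted = {number: bool(re.fullmatch(phone_pattern, number)) for number in samples}
for number, label in samples.items():
    print(f"{label:20} {number:15} accepted={accepted[number]}")

# findall in running text: \(? grabs the "(" that merely wraps the number
wrapped = re.findall(phone_pattern, "Call me (702-951-4528) today.")
print(wrapped)
```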

While these patterns work, they are difficult to understand and modify for anyone who is not familiar with regex.

📖 Readable code reduces maintenance burden and improves team productivity. Check out Production-Ready Data Science for detailed guidance on writing production-quality code.

In the next section, we will use pregex to build more readable patterns.

pregex: Build Readable Patterns

pregex is a Python library that lets you build regex patterns using readable Python syntax instead of regex symbols. It breaks complex patterns into self-documenting components that clearly express validation logic.

Install pregex:

pip install pregex

Extract Email Addresses

Let’s extract emails using pregex’s readable components.

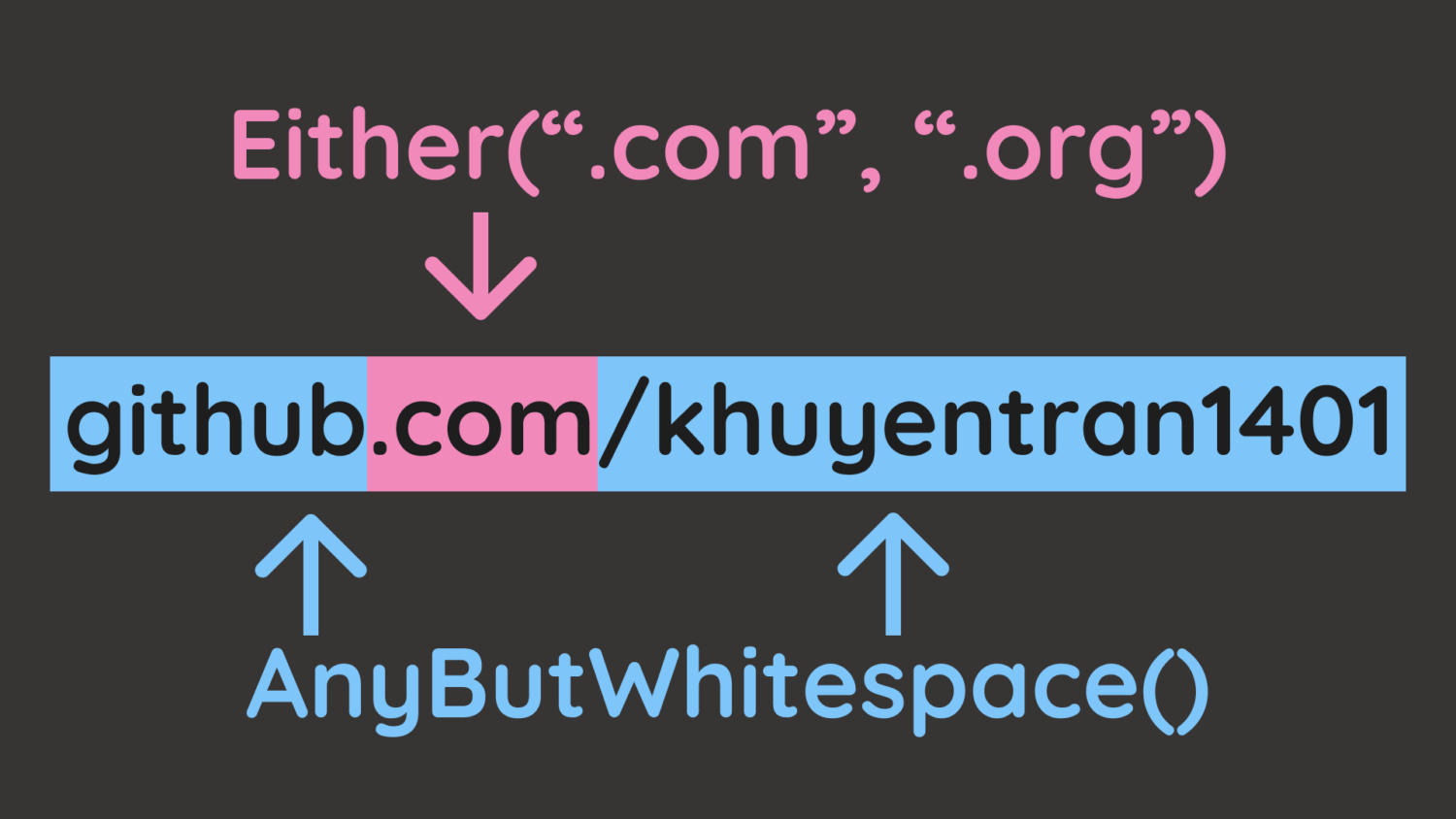

In the code, we will use the following components:

Username: OneOrMore(AnyButWhitespace()) – One or more non-whitespace characters (e.g. maria95)

Separator: @ – Literal @ symbol

Domain name: OneOrMore(AnyButWhitespace()) – One or more non-whitespace characters (e.g. gmail or outlook)

Extension: Either(".com", ".org", ".io", ".net") – Match specific extensions (.com, .org, .io, .net)

from pregex.core.classes import AnyButWhitespace
from pregex.core.quantifiers import OneOrMore
from pregex.core.operators import Either

username = OneOrMore(AnyButWhitespace())
at_symbol = "@"
domain_name = OneOrMore(AnyButWhitespace())
extension = Either(".com", ".org", ".io", ".net")

email_pattern = username + at_symbol + domain_name + extension

# Extract emails
df_tickets["emails_pregex"] = df_tickets["message"].apply(
    lambda x: email_pattern.get_matches(x)
)
df_tickets[["message", "emails_pregex"]].head()

| | message | emails_pregex |
|---|---|---|
| 0 | Contact me at hfuentes@anderson.com or (798)034-3254 to resolve this issue. | [hfuentes@anderson.com] |
| 1 | You can reach me by phone (702-951-4528) or email (russellbrandon@simon-rogers.org) anytime. | [(russellbrandon@simon-rogers.org] |
| 2 | My contact details: ehamilton@silva.io and 242 844 7293. | [ehamilton@silva.io] |
| 3 | Feel free to call 901.794.1337 or email ogarcia@howell-chavez.net for assistance. | [ogarcia@howell-chavez.net] |

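The output shows that we are able to extract an email from every ticket. In ticket 1, though, the match keeps the opening parenthesis, because AnyButWhitespace() also matches punctuation such as (.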

pregex turns pattern matching from symbol decoding into readable code. OneOrMore(AnyButWhitespace()) communicates its intent more clearly than the equivalent \S+, reducing the time teammates spend understanding and modifying validation logic.

Extract Phone Numbers

Now build the phone pattern from these components:

First three digits: Optional("(") + Exactly(AnyDigit(), 3) + Optional(")")

Separator: Either(" ", "-", ".") (optional after the first three digits, required before the last four)

Second three digits: Exactly(AnyDigit(), 3)

Last four digits: Exactly(AnyDigit(), 4)

from pregex.core.classes import AnyDigit
from pregex.core.quantifiers import Optional, Exactly
from pregex.core.operators import Either

# Build phone pattern using pregex
first_three = Optional("(") + Exactly(AnyDigit(), 3) + Optional(")")
separator = Either(" ", "-", ".")
second_three = Exactly(AnyDigit(), 3)
last_four = Exactly(AnyDigit(), 4)

phone_pattern = first_three + Optional(separator) + second_three + separator + last_four

# Extract phone numbers
df_tickets['phones_pregex'] = df_tickets['message'].apply(
    lambda x: phone_pattern.get_matches(x)
)
df_tickets[['message', 'phones_pregex']].head()

| | message | phones_pregex |
|---|---|---|
| 0 | Contact me at hfuentes@anderson.com or (798)034-3254 to resolve this issue. | [(798)034-3254] |
| 1 | You can reach me by phone (702-951-4528) or email (russellbrandon@simon-rogers.org) anytime. | [(702-951-4528] |
| 2 | My contact details: ehamilton@silva.io and 242 844 7293. | [242 844 7293] |
| 3 | Feel free to call 901.794.1337 or email ogarcia@howell-chavez.net for assistance. | [901.794.1337] |

If your system requires the raw regex pattern, you can get it with get_compiled_pattern():

print("Compiled email pattern:", email_pattern.get_compiled_pattern().pattern)

print("Compiled phone pattern:", phone_pattern.get_compiled_pattern().pattern)

Compiled email pattern: \S+@\S+(?:\.com|\.org|\.io|\.net)

Compiled phone pattern: \(?\d{3}\)?(?: |-|\.)?\d{3}(?: |-|\.)\d{4}
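Because get_compiled_pattern() returns a plain pattern string, you can also reuse it directly with Python's re module. The sketch below pastes in the printed email pattern and shows why ticket 1's match keeps its leading parenthesis: \S+ accepts any non-whitespace character, including (. The stricter variant, which excludes parentheses with [^\s()]+, is one possible tweak for illustration and is not something pregex generates.

```python
import re

# Pattern string as printed by get_compiled_pattern() above
email_regex = r"\S+@\S+(?:\.com|\.org|\.io|\.net)"
message = "You can reach me by phone (702-951-4528) or email (russellbrandon@simon-rogers.org) anytime."

# \S+ happily starts the match at the "(" that wraps the address
loose = re.findall(email_regex, message)

# Illustrative tweak: forbid whitespace and parentheses in username and domain
strict_regex = r"[^\s()]+@[^\s()]+(?:\.com|\.org|\.io|\.net)"
strict = re.findall(strict_regex, message)

print(loose)
print(strict)
```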

For more pregex examples including URLs and time patterns, see PRegEx: Write Human-Readable Regular Expressions in Python.

Parse Structured Ticket Headers

Now let’s tackle a more complex task: parsing structured ticket headers that contain multiple fields:

Ticket: 1000 | Priority: High | Assigned: John Doe # escalated

We will use Capture to extract just the values we need from each ticket:

from pregex.core.quantifiers import OneOrMore
from pregex.core.classes import AnyDigit, AnyLetter, AnyWhitespace
from pregex.core.groups import Capture

sample_ticket = "Ticket: 1000 | Priority: High | Assigned: John Doe # escalated"

# Define patterns with Capture to extract just the values
whitespace = AnyWhitespace()  # a single whitespace character
ticket_id_pattern = "Ticket:" + whitespace + Capture(OneOrMore(AnyDigit()))
priority_pattern = "Priority:" + whitespace + Capture(OneOrMore(AnyLetter()))
name_pattern = (
    "Assigned:"
    + whitespace
    + Capture(OneOrMore(AnyLetter()) + " " + OneOrMore(AnyLetter()))
)

# Define separator pattern (whitespace around pipe)
separator = whitespace + "|" + whitespace

# Combine all patterns with separators
ticket_pattern = (
    ticket_id_pattern
    + separator
    + priority_pattern
    + separator
    + name_pattern
)

Next, define a function that extracts the ticket components from the captured groups:

def get_ticket_components(ticket_string, ticket_pattern):
    """Extract ticket components from a ticket string."""
    try:
        # First match only: a tuple of the three captured values
        captures = ticket_pattern.get_captures(ticket_string)[0]
        return pd.Series(
            {
                "ticket_id": captures[0],
                "priority": captures[1],
                "assigned": captures[2],
            }
        )
    except IndexError:
        # No match: indexing the empty result raises IndexError
        return pd.Series(
            {"ticket_id": None, "priority": None, "assigned": None}
        )

Apply the function with the pattern defined above to the sample ticket.

components = get_ticket_components(sample_ticket, ticket_pattern)

print(components.to_dict())

{'ticket_id': '1000', 'priority': 'High', 'assigned': 'John Doe'}

This looks good! Let’s apply it to ticket headers with inconsistent whitespace around the separators. Start by creating the dataset:

import pandas as pd

# Create tickets with embedded comments and variable whitespace
tickets = [
    "Ticket: 1000 | Priority: High | Assigned: John Doe # escalated",
    "Ticket: 1001  |  Priority: Medium  |  Assigned: Maria Garcia # team lead",
    "Ticket:1002| Priority:Low |Assigned:Alice Smith # non-urgent",
    "Ticket: 1003 | Priority: High | Assigned: Bob Johnson # on-call",
]

df_tickets = pd.DataFrame({'ticket': tickets})
df_tickets.head()

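| | ticket |
|---|---|
| 0 | Ticket: 1000 \| Priority: High \| Assigned: John Doe # escalated |
| 1 | Ticket: 1001 \| Priority: Medium \| Assigned: Maria Garcia # team lead |
| 2 | Ticket:1002\| Priority:Low \|Assigned:Alice Smith # non-urgent |
| 3 | Ticket: 1003 \| Priority: High \| Assigned: Bob Johnson # on-call |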

# Extract individual components using the function
df_pregex = df_tickets.copy()
components_df = df_pregex["ticket"].apply(get_ticket_components, ticket_pattern=ticket_pattern)
df_pregex = df_pregex.assign(**components_df)
df_pregex[["ticket_id", "priority", "assigned"]].head()

| | ticket_id | priority | assigned |
|---|---|---|---|
| 0 | 1000 | High | John Doe |
| 1 | None | None | None |
| 2 | None | None | None |
| 3 | 1003 | High | Bob Johnson |

We can see that pregex misses rows 1 and 2 (tickets 1001 and 1002) because AnyWhitespace() matches exactly one whitespace character, while those rows use inconsistent spacing around the separators.

Making pregex patterns flexible enough for variable formatting requires adding optional quantifiers to the whitespace pattern so that it can match zero or more spaces around the separators.
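For reference, here is roughly what that kind of fix amounts to, written directly with Python's re module rather than pregex: each separator is surrounded by \s* (zero or more whitespace characters), and named groups play the role of Capture. This is a sketch for illustration; the extra ticket 1004 is made up to show runs of several spaces.

```python
import re

# \s* tolerates both "Ticket:1002|" (no spaces) and "Ticket: 1000 |" (one space)
header_re = re.compile(
    r"Ticket:\s*(?P<ticket_id>\d+)\s*\|\s*"
    r"Priority:\s*(?P<priority>[A-Za-z]+)\s*\|\s*"
    r"Assigned:\s*(?P<assigned>[A-Za-z]+\s+[A-Za-z]+)"
)

tickets = [
    "Ticket: 1000 | Priority: High | Assigned: John Doe # escalated",
    "Ticket: 1001 | Priority: Medium | Assigned: Maria Garcia # team lead",
    "Ticket:1002| Priority:Low |Assigned:Alice Smith # non-urgent",
    "Ticket: 1003 | Priority: High | Assigned: Bob Johnson # on-call",
    "Ticket:  1004   |Priority:Low|  Assigned:   Dana   Lee",  # made-up extra case
]

# match() anchors at the start; the trailing "# comment" is simply left unmatched
rows = [header_re.match(t).groupdict() for t in tickets]
for row in rows:
    print(row)
```

It works, but the pattern is now dense with \s* tokens, and the comment is only skipped because it happens to come last.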

As these fixes accumulate, pregex’s readability advantage diminishes, and you end up with code that’s as hard to understand as raw regex but more verbose.

When parsing structured data with consistent patterns but varying details, pyparsing provides more robust handling than regex.

pyparsing: Parse Structured Ticket Headers

Unlike regex’s pattern matching approach, pyparsing lets you define grammar rules using Python classes, making parsing logic explicit and maintainable.

Install pyparsing:

pip install pyparsing

Let’s parse the complete structure with pyparsing, including:

Ticket ID: Word(nums) – One or more digits (e.g. 1000)

Priority: Word(alphas) – One or more letters (e.g. High)

Name: Word(alphas) + Word(alphas) – First and last name (e.g. John Doe)

We will also use pythonStyleComment to ignore Python-style comments (everything from # to the end of the line) during parsing.

from pyparsing import Word, alphas, nums, pythonStyleComment

# Define grammar components
ticket_num = Word(nums)
priority = Word(alphas)
name = Word(alphas) + Word(alphas)

# Define complete structure
ticket_grammar = (
    "Ticket:"
    + ticket_num
    + "|"
    + "Priority:"
    + priority
    + "|"
    + "Assigned:"
    + name
)

# Automatically ignore Python-style comments throughout parsing
ticket_grammar.ignore(pythonStyleComment)

sample_ticket = "Ticket: 1000 | Priority: High | Assigned: John Doe # escalated"
sample_result = ticket_grammar.parse_string(sample_ticket)
print(sample_result)

['Ticket:', '1000', '|', 'Priority:', 'High', '|', 'Assigned:', 'John', 'Doe']

Awesome! We are able to extract the ticket components from the ticket with a much simpler pattern!

Compare this to the pregex implementation:

ticket_pattern = (
    "Ticket:" + whitespace + Capture(OneOrMore(AnyDigit()))
    + whitespace + "|" + whitespace
    + "Priority:" + whitespace + Capture(OneOrMore(AnyLetter()))
    + whitespace + "|" + whitespace
    + "Assigned:"
    + whitespace
    + Capture(OneOrMore(AnyLetter()) + " " + OneOrMore(AnyLetter()))
)

We can see that pyparsing handles structured data better than pregex for the following reasons:

No whitespace boilerplate: pyparsing handles spacing automatically while pregex requires + whitespace + between every component

Self-documenting: Word(alphas) clearly means “letters” while pregex’s nested Capture(OneOrMore(AnyLetter())) is less readable

To extract ticket components by name, attach a results name to each element by calling it with a string (for example, ticket_num("ticket_id")), then access the values via dot notation:

# Define complete structure with a results name on each field
ticket_grammar = (
    "Ticket:"
    + ticket_num("ticket_id")
    + "|"
    + "Priority:"
    + priority("priority")
    + "|"
    + "Assigned:"
    + name("assigned")
)

# Automatically ignore Python-style comments throughout parsing
ticket_grammar.ignore(pythonStyleComment)

sample_ticket = "Ticket: 1000 | Priority: High | Assigned: John Doe # escalated"
sample_result = ticket_grammar.parse_string(sample_ticket)

# Access the components by name
print(
    f"Ticket ID: {sample_result.ticket_id}",
    f"Priority: {sample_result.priority}",
    f"Assigned: {' '.join(sample_result.assigned)}",
)

Ticket ID: 1000 Priority: High Assigned: John Doe

Let’s apply this to the entire dataset.

# Parse all tickets and create columns
def parse_ticket(ticket, ticket_grammar):
    result = ticket_grammar.parse_string(ticket)
    return pd.Series(
        {
            "ticket_id": result.ticket_id,
            "priority": result.priority,
            "assigned": " ".join(result.assigned),
        }
    )


df_pyparsing = df_tickets.copy()
components_df_pyparsing = df_pyparsing["ticket"].apply(parse_ticket, ticket_grammar=ticket_grammar)
df_pyparsing = df_pyparsing.assign(**components_df_pyparsing)
df_pyparsing[["ticket_id", "priority", "assigned"]].head()

| | ticket_id | priority | assigned |
|---|---|---|---|
| 0 | 1000 | High | John Doe |
| 1 | 1001 | Medium | Maria Garcia |
| 2 | 1002 | Low | Alice Smith |
| 3 | 1003 | High | Bob Johnson |

The output looks good!

Let’s try to parse some more structured data with pyparsing.

Extract Code Blocks from Markdown

Use SkipTo to extract Python code between code block markers without complex regex patterns like r'```python(.*?)```':

from pyparsing import Literal, SkipTo

code_start = Literal("```python")
code_end = Literal("```")
code_block = code_start + SkipTo(code_end)("code") + code_end

markdown = """```python
def hello():
    print("world")
```"""

result = code_block.parse_string(markdown)
print(result.code)

def hello():
    print("world")
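The regex alternative mentioned above has a catch: by default . does not match a newline, so the fence-matching pattern finds nothing in a multi-line block unless you pass re.DOTALL. The sketch below builds the three-backtick fence as "`" * 3 only so that the example can live inside a fenced code block.

```python
import re

FENCE = "`" * 3  # three backticks, built programmatically

markdown = FENCE + 'python\ndef hello():\n    print("world")\n' + FENCE
pattern = FENCE + r"python(.*?)" + FENCE

# Without re.DOTALL, "." stops at newlines, so a multi-line block never matches
without_dotall = re.findall(pattern, markdown)

# With re.DOTALL, ".*?" can cross newlines and lazily stops at the first closing fence
with_dotall = re.findall(pattern, markdown, flags=re.DOTALL)

print(without_dotall)
print(with_dotall)
```

SkipTo sidesteps this flag entirely, since it simply scans forward until the closing marker appears.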

Parse Nested Structures

nested_expr handles arbitrary nesting depth, which Python's built-in re module cannot match because it has no support for recursive patterns:

from pyparsing import nested_expr

# Default delimiters: parentheses
nested_list = nested_expr()
result = nested_list.parse_string("((2 + 3) * (4 - 1))")
print(result.as_list())

[[['2', '+', '3'], '*', ['4', '-', '1']]]
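To see why nesting calls for a parser rather than a single pattern, here is a minimal stack-based sketch in plain Python that produces the same nested-list shape for this expression. parse_nested is a hypothetical helper for illustration, not how nested_expr is implemented: it only recognizes parentheses, splits everything else on whitespace, and raises ValueError on unbalanced input.

```python
import re


def parse_nested(text: str) -> list:
    """Hypothetical helper: turn '((2 + 3) * (4 - 1))' into nested Python lists."""
    # Tokens: "(", ")", or any run of characters that are neither whitespace nor parentheses
    tokens = re.findall(r"\(|\)|[^\s()]+", text)
    stack = [[]]  # the bottom list collects top-level groups
    for tok in tokens:
        if tok == "(":
            stack.append([])  # open a new nesting level
        elif tok == ")":
            if len(stack) == 1:
                raise ValueError("unbalanced ')'")
            group = stack.pop()  # close the current level...
            stack[-1].append(group)  # ...and attach it to its parent
        else:
            stack[-1].append(tok)
    if len(stack) != 1:
        raise ValueError("unbalanced '('")
    return stack[0]


print(parse_nested("((2 + 3) * (4 - 1))"))
```

Each ( pushes a new list and each ) pops one, so the stack depth tracks the nesting level without any fixed limit, which is the bookkeeping a single pattern in re has no way to express.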

Conclusion

So how do you know when to use each tool? Choose your tool based on your needs:

Use simple regex when:

Extracting simple, well-defined patterns (emails, phone numbers with consistent format)

Pattern won’t need frequent modifications

Use pregex when:

Pattern has multiple variations (different phone number formats)

Need to document pattern logic through readable code

Use pyparsing when:

Need to extract multiple fields from structured text (ticket headers, configuration files)

Must handle variable formatting (inconsistent whitespace, embedded comments)

In summary, start with simple regex, adopt pregex when readability matters, and switch to pyparsing when structure becomes complex.

Related Tutorials

Here are some related text processing tools:

Text similarity matching: 4 Text Similarity Tools: When Regex Isn’t Enough compares regex preprocessing, difflib, RapidFuzz, and Sentence Transformers for matching product names and handling data variations

Business entity extraction: langextract vs spaCy: AI-Powered vs Rule-Based Entity Extraction evaluates regex, spaCy, GLiNER, and langextract for extracting structured information from financial documents

📚 Want to go deeper? Learning new techniques is the easy part. Knowing how to structure, test, and deploy them is what separates side projects from real work. My book shows you how to build data science projects that actually make it to production. Get the book →
