RapidFuzz: Find Similar Strings Despite Typos and Variations

Motivation

When dealing with real-world data, exact string matching often fails to capture similar entries due to typos, inconsistent formatting, or data entry errors. For example, trying to match company names like “Apple Inc.” with “Apple Incorporated” or “APPLE INC” requires more sophisticated matching techniques.

Here’s an example showing the limitations of exact matching:

companies = ["Apple Inc.", "Microsoft Corp.", "Google LLC"]

search_term = "apple incorporated"

# Traditional exact matching

matches = [company for company in companies if company.lower() == search_term.lower()]

print(f"Exact matches: {matches}")

Output:

Exact matches: []

Introduction to RapidFuzz

RapidFuzz is a fast string matching library that provides various similarity metrics for fuzzy string matching. It’s designed as a faster, MIT-licensed alternative to FuzzyWuzzy, with additional string metrics and algorithmic improvements.

Installation is straightforward:

pip install rapidfuzz

Fuzzy String Matching

RapidFuzz provides several methods for fuzzy string matching. Here’s how to use them:

Compare two similar strings:

Simple ratio comparison:

from rapidfuzz import fuzz, process

similarity = fuzz.ratio("Apple Inc.", "APPLE INC")

print(f"Similarity score: {similarity:.3f}")

Output:

Similarity score: 31.579

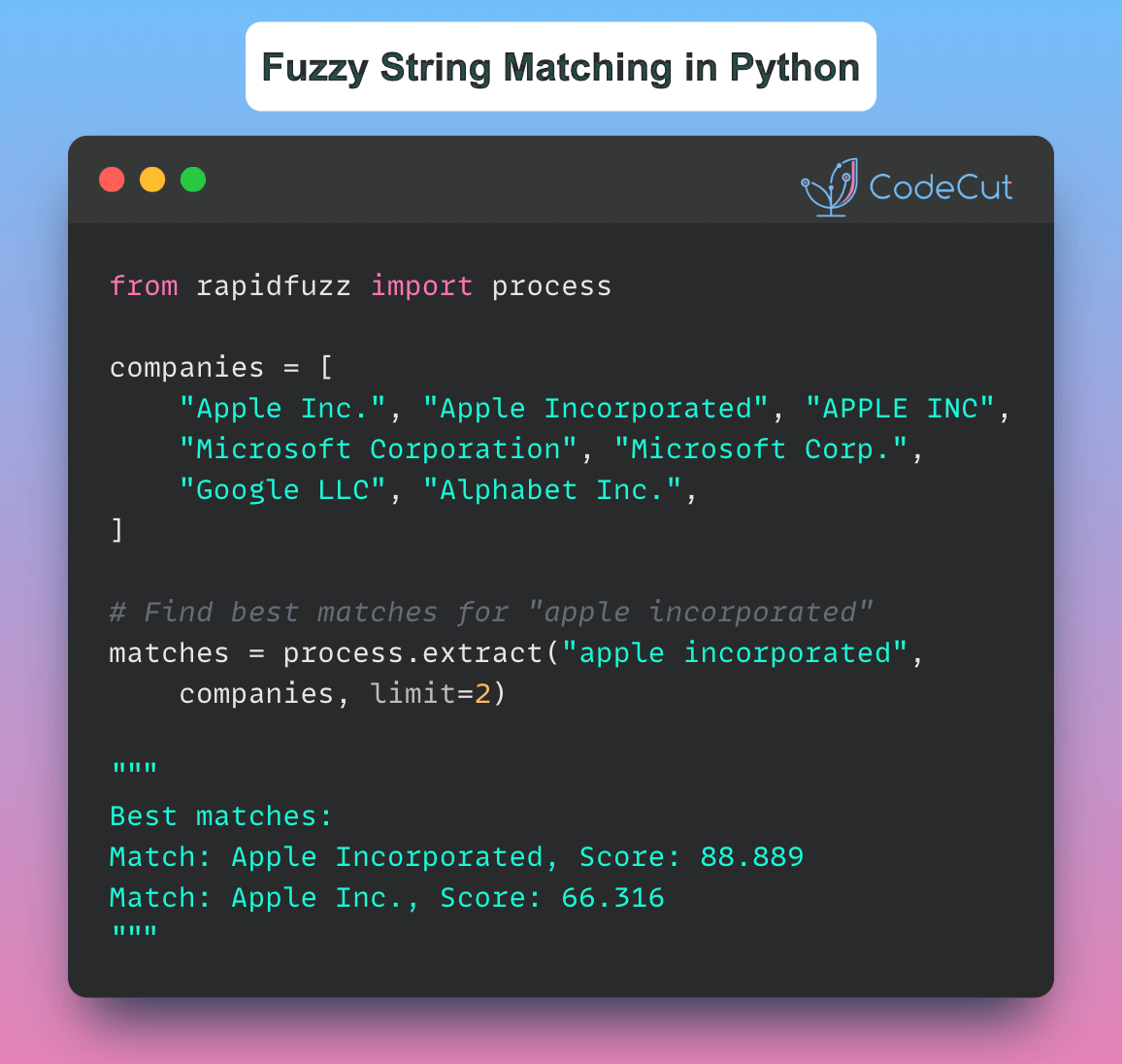

Find best matches from a list:

# Sample company names with variations

companies = [

"Apple Inc.",

"Apple Incorporated",

"APPLE INC",

"Microsoft Corporation",

"Microsoft Corp.",

"Google LLC",

"Alphabet Inc.",

]

# Find best matches for "apple incorporated"

matches = process.extract("apple incorporated", companies, scorer=fuzz.WRatio, limit=2)

print("Best matches:")

for match in matches:

print(f"Match: {match[0]}, Score: {match[1]:.3f}")

Output:

Best matches:

Match: Apple Incorporated, Score: 88.889

Match: Apple Inc., Score: 66.316

RapidFuzz automatically:

Calculates similarity scores between strings

Handles case sensitivity

Provides multiple matching algorithms

Returns confidence scores for matches

Conclusion

RapidFuzz significantly simplifies the process of fuzzy string matching in Python, making it an excellent choice for data scientists and engineers who need to perform efficient and accurate string matching operations.

RapidFuzz: Find Similar Strings Despite Typos and Variations Read More »